Data Marketplace

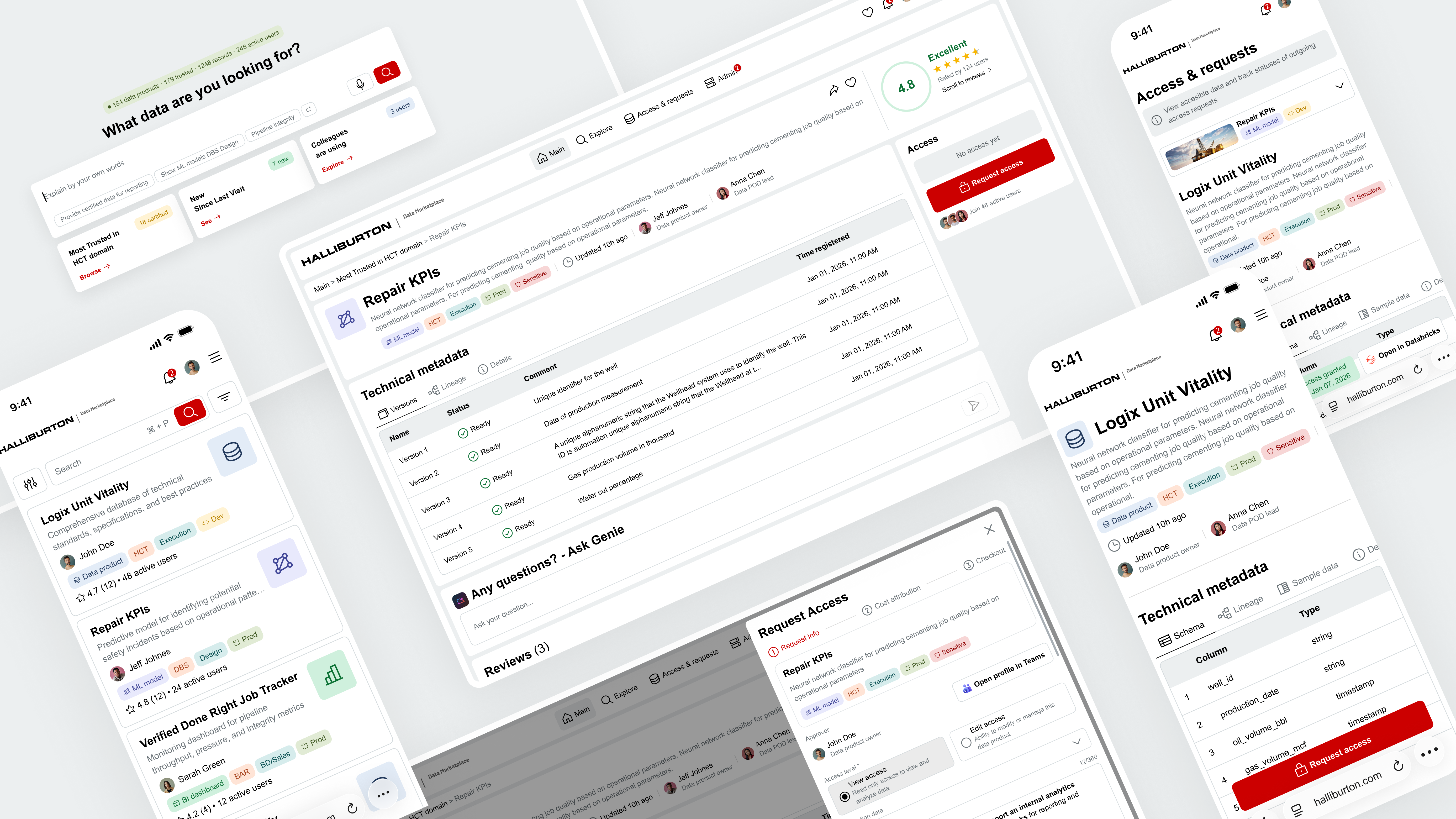

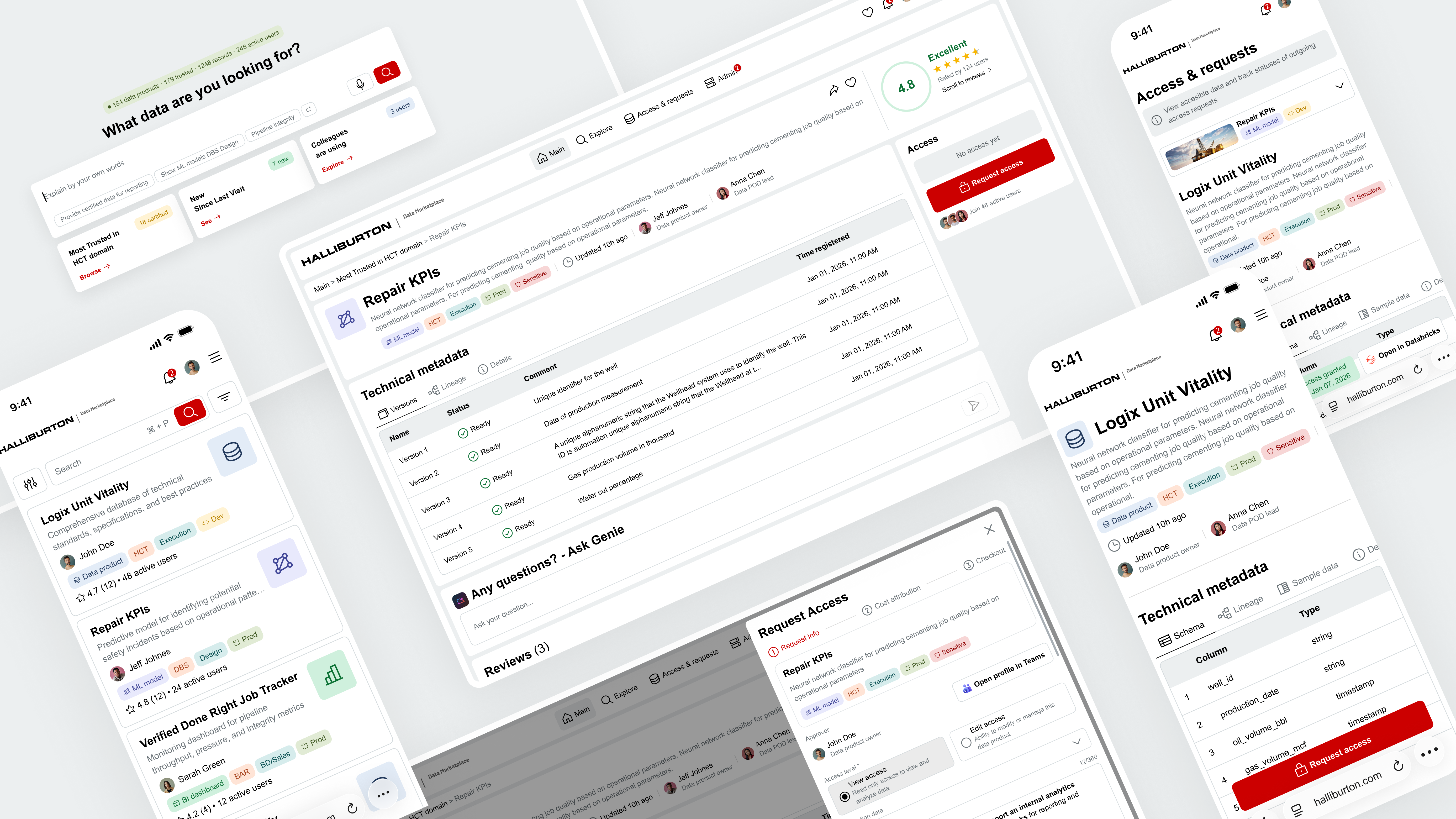

Shipping Halliburton's first unified data platform through an AI-native design-to-code pipeline.

The first tool at Halliburton where engineers can find, trust, and access any data asset in minutes.

01 — Problem & Context

Problem

Data assets live across disconnected systems with inconsistent metadata and unclear ownership.

Goal

A unified marketplace for curated data, reports, models, and AI agents — part of Halliburton's Data Ribbon.

Success

Engineers find, validate, and request access to any data asset within a few minutes.

02 — Process

1.0

Discovery

Aligned with the Technology Director and AI lead on vision, then defined two product phases — MVP and Trust/AI — so the design system could scale without rework.

2 strategies · 1 foundation

2.0

Research

Mapped 4 persona groups, 5 user flows, user journey maps, and the information architecture that later powered the MCP hand-off.

4 personas · 5 flows · IA

3.0

Prototype & Design System

Started with AI-generated prototypes to validate structure fast, then built a scalable design system with reusable tokens and components.

AI prototype · Figma system

4.0

Validation

Two rounds of testing with real users — Data POD Leads and Product Owners. Caught blind spots before a single line of code was written.

2 rounds · 10 users

5.0

Hand-off to Dev

Connected the dev team to Figma through an MCP server. Developers pulled specs, tokens, and components directly into their IDE, with no screenshots and no outdated docs.

Figma MCP · live specs

6.0

Post-launch Research

Planned how to track adoption, task success, and friction points after launch, so the platform keeps evolving based on real usage data.

Analytics · iteration

03 — UX Strategy

UX strategy is a long-term plan that connects user insights, product vision, and business objectives into a coherent direction for designing and improving the user experience.

Phase 1 established the foundation: a single marketplace where engineers can find, trust, and access any data asset. The focus was on structure, clarity, and core workflows before layering in AI and social features.

| Vision | Provide a user-friendly means of accessing curated, trusted data, reports, models, and AI Agents that are a part of Halliburton's Data Ribbon. |

| Problem | Users cannot efficiently work with data because it is difficult to find, difficult to access, and not easy to compare across multiple sources. |

| Success looks like | Data engineers use the dashboard daily as the primary entry point to find, validate, request access, and consume data assets within minutes — not days or weeks. |

Data Scientist / Engineer

Browse data products, fast and relevant search, preview data, request & obtain access quickly.

Business User

Find existing reports across BI tools that answer questions from trusted data.

Data Product Owner

Manage data product info, approve or reject access requests, define access levels.

Data POD Lead

Oversee data products across teams, monitor quality and access patterns.

What I delivered end-to-end on this project:

Phase 2 transforms the marketplace from a browsing tool into a trust-driven, AI-powered discovery platform. Users describe what they need in plain language, see ranked results they can trust, and make access decisions with confidence.

| Vision | Transform the Data Marketplace from a browsing tool into an intelligent, trust-driven data discovery platform powered by AI. |

| Problem | Users can find data but cannot judge if it's reliable, relevant, or safe to use. Discovery is manual, trust signals are absent, and social knowledge is invisible. |

| Success looks like | Engineers and business users describe what they need in plain language, receive ranked trusted results in seconds, and make access decisions confidently. |

| Phase 1 → 2 | The existing browse experience moves to a secondary role. A new AI-powered entry point takes its place — built around intent rather than navigation. |

04 — Research

A deep research phase mapped out who uses the platform, how they interact with it, and where the friction points are. Four persona groups, detailed user flows, and end-to-end journey maps shaped every design decision.

Users

Data Scientist / Engineer

| Who | Technical specialist working with Python, ML models, and data integrations. |

| Pain | Hard to find datasets across sources; long approval cycles; unclear ownership. |

Business User

| Who | Analysts, PMs, and business stakeholders using BI tools. |

| Pain | Too much raw data, too few curated business-ready reports; hard to trust freshness. |

Creators & Approvers

Data Product Owner

| Who | Product manager responsible for governance and lifecycle of data products. |

| Pain | Fragmented ownership; too many manual approval steps; slow onboarding. |

Data POD Lead

| Who | Team/Tech Lead for a data engineering POD or domain. |

| Pain | Heavy manual documentation work; poor visibility into who uses their products. |

05 — Prototype & Design System

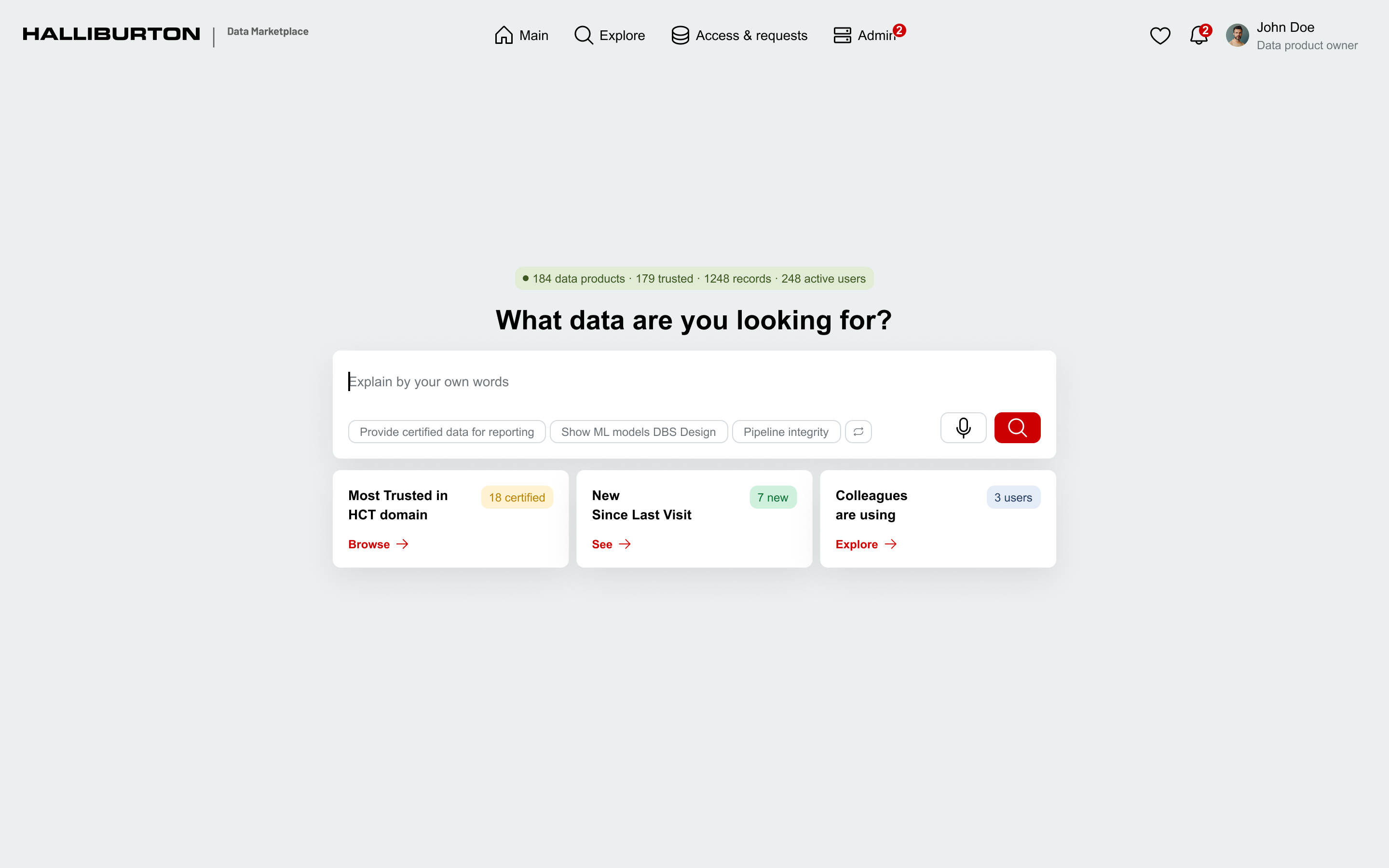

Instead of jumping straight into pixel-perfect mockups, I started with an AI-based prototype to quickly test concepts, layouts, and interaction patterns. This let me brainstorm and validate what works and what doesn't — before investing time in high-fidelity design.

Once the structure was proven, I built a scalable design system with reusable components, tokens, and patterns — then created the final mockups for the dev team.

Used AI tools to rapidly generate and iterate on layout concepts, testing different approaches to search, filtering, data cards, and access flows. This phase was about speed and exploration — finding the right direction before committing to a design system.

Multiple iterations across layout, search, filtering, and access flow concepts — tested structurally before any high-fidelity work began.

AI-powered home page with natural language search and personalized recommendations

One system, two themes, zero duplication. Every color in the product traces through three layers — primitives at the base, semantic tokens that swap between light and dark modes, and component tokens that apply them consistently.

~158 primitive · ~45 semantic · ~459 component tokens across 2 themes. Showing a selection of key chains.
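The three-layer chain can be sketched in a few lines. This is a minimal illustration only: the token names, hex values, and the `resolveToken` helper are hypothetical and are not taken from the project's design system.

```typescript
// Hypothetical token names and values, for illustration only.
type Theme = "light" | "dark";

// Layer 1: primitives are raw values with no meaning attached.
const primitives: Record<string, string> = {
  "blue.600": "#1d4ed8",
  "blue.300": "#93c5fd",
  "gray.900": "#111827",
  "gray.50": "#f9fafb",
};

// Layer 2: semantic tokens name a purpose and point at one primitive per theme.
const semantic: Record<string, Record<Theme, string>> = {
  "color.action": { light: "blue.600", dark: "blue.300" },
  "color.surface": { light: "gray.50", dark: "gray.900" },
};

// Layer 3: component tokens point at semantic tokens, never at raw values.
const component: Record<string, string> = {
  "button.primary.background": "color.action",
  "card.background": "color.surface",
};

// Walk the chain component -> semantic -> primitive for the active theme.
function resolveToken(name: string, theme: Theme): string {
  const semanticName = component[name];
  if (semanticName === undefined) throw new Error(`Unknown component token: ${name}`);
  const byTheme = semantic[semanticName];
  if (byTheme === undefined) throw new Error(`Unknown semantic token: ${semanticName}`);
  const value = primitives[byTheme[theme]];
  if (value === undefined) throw new Error(`Unknown primitive: ${byTheme[theme]}`);
  return value;
}

console.log(resolveToken("button.primary.background", "light")); // "#1d4ed8"
console.log(resolveToken("button.primary.background", "dark")); // "#93c5fd"
```

Because only the semantic layer knows about themes, switching between light and dark changes one lookup and leaves every component token untouched.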

06 — Validation

Before moving to development, I ran two structured feedback sessions with the primary user groups — validating designs, aligning on roles and workflows, and catching blind spots early.

Design review & feedback session with Data POD Leads — the technical owners who create and maintain data products on the internal data platform. Focused on validating roles, publishing workflows, and access management.

Key takeaways

Design review & feedback session with Data Product Owners — the business owners of data products, responsible for final approval and publication decisions. Focused on publication flow, access governance, and role alignment.

Key takeaways

07 — Hand-off to Dev

Traditional hand-off means screenshots, redlines, and a PDF that's out of date the moment you save it. On this project, I tried something else: connect developers directly to the Figma design system through MCP — Model Context Protocol — so they could pull component specs, tokens, and interaction states from their IDE in real time. No more guessing from static docs.

Traditional vs MCP Pipeline

Traditional hand-off

MCP pipeline

How it flows

What this looks like in code

Every prop, every style, every token — traced back to a Figma variable. Update the variable in Figma, and the next sync updates the code.

Generic example — structure shown, real component names under NDA.
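As a rough sketch of that traceability, assume each sync yields a flat snapshot of variable names and resolved values. The snapshot shape, the variable names, and the helpers `toCssVarName`, `toCss`, and `changedVariables` are all hypothetical; this is neither the Figma API nor the project's code.

```typescript
// Hypothetical shapes: this is not the Figma API or the project's real code.
type VariableSnapshot = Record<string, string>; // variable name -> resolved value

// Turn a variable name such as "button/primary/background" into a CSS custom property name.
function toCssVarName(variableName: string): string {
  return "--" + variableName.toLowerCase().replace(/[^a-z0-9]+/g, "-");
}

// Emit one :root block so components reference var(--...) instead of raw values.
function toCss(snapshot: VariableSnapshot): string {
  const lines = Object.keys(snapshot)
    .sort()
    .map((name) => `  ${toCssVarName(name)}: ${snapshot[name]};`);
  return `:root {\n${lines.join("\n")}\n}`;
}

// List variables that were added, removed, or changed between two syncs.
function changedVariables(previous: VariableSnapshot, next: VariableSnapshot): string[] {
  const allNames = Object.keys({ ...previous, ...next });
  return allNames.filter((name) => previous[name] !== next[name]).sort();
}

const lastSync: VariableSnapshot = {
  "button/primary/background": "#1d4ed8",
  "button/primary/radius": "8px",
};
// A designer changes one variable; the next sync picks it up.
const thisSync: VariableSnapshot = { ...lastSync, "button/primary/radius": "12px" };

console.log(changedVariables(lastSync, thisSync)); // ["button/primary/radius"]
console.log(toCss(thisSync));
```

Components that read `var(--button-primary-radius)` then pick up the new value without any hand-edited styles.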

| Connection | MCP server connected to Figma file, exposing the design system as structured data that dev tools could consume in real time. |

| Components | Developers accessed component specs, props, variants, and usage guidelines directly from their IDE — no context switching to Figma. |

| Tokens | Design tokens (colors, typography, spacing, elevation) synced automatically — any update in Figma propagated to code. |

| Result | Significantly reduced back-and-forth between design and dev. Components were built accurately on the first pass, matching the design system 1:1. |

Result: components delivered significantly faster than traditional handoff cycles — fewer iterations, higher first-pass accuracy.

| Structured Figma file | MCP reads layer names, frame structure, and component hierarchy. A well-organized Figma file with clear section naming made the design system legible to the AI — messy files produce messy output. |

| Token discipline | Three-tier token architecture (primitives → semantic → component) gave MCP a stable interface. Any token change in Figma propagated cleanly to code. |

| Shared vocabulary | Dev and design aligned on component names, prop names, and variant terminology up front. Naming consistency turned out to be the biggest unlock. |

| Iteration speed | Fewer back-and-forth cycles meant design could iterate on edge cases while dev built the core in parallel. |

08 — Post-launch Research

The platform is currently in active development and heading toward launch. Rather than waiting until go-live to figure out how to measure success, I designed the post-launch research program upfront — combining traditional analytics, modern AI-powered tools, and continuous user feedback.

| Adoption | Active users, onboarding completion rates, and frequency of return visits across all persona groups. |

| Task success | Time-to-find for datasets, search-to-access conversion, and first-attempt success rate for access requests. |

| Satisfaction | In-product micro-surveys and follow-up interviews triggered by key user actions. |

| Pain points | Friction identified through AI-summarized session replays, support tickets, and heatmap analysis. |
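Two of the task-success metrics above can be computed from a plain event log. The sketch below assumes a hypothetical event schema (`UsageEvent`, with made-up event types and field names), since the real analytics setup is not described here, and it covers only time-to-find and search-to-access conversion.

```typescript
// Hypothetical event schema; the real analytics setup is not described in the source.
type EventType = "search" | "asset_opened" | "access_requested";

interface UsageEvent {
  sessionId: string;
  type: EventType;
  timestampMs: number;
}

interface TaskSuccessMetrics {
  medianTimeToFindMs: number | null; // first search -> first asset opened
  searchToAccessConversion: number; // sessions requesting access / sessions that searched
}

function median(values: number[]): number | null {
  if (values.length === 0) return null;
  const sorted = values.slice().sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

function taskSuccess(events: UsageEvent[]): TaskSuccessMetrics {
  // Per session, keep the earliest timestamp seen for each event type.
  const firstSeen: Record<string, Partial<Record<EventType, number>>> = {};
  for (const e of events) {
    const session = (firstSeen[e.sessionId] = firstSeen[e.sessionId] || {});
    const seen = session[e.type];
    if (seen === undefined || e.timestampMs < seen) session[e.type] = e.timestampMs;
  }

  const timesToFind: number[] = [];
  let searched = 0;
  let requested = 0;
  for (const id of Object.keys(firstSeen)) {
    const s = firstSeen[id];
    if (s.search === undefined) continue; // only sessions that include a search count
    searched += 1;
    // Simplification: the earliest open must not precede the first search.
    if (s.asset_opened !== undefined && s.asset_opened >= s.search) {
      timesToFind.push(s.asset_opened - s.search);
    }
    if (s.access_requested !== undefined && s.access_requested >= s.search) requested += 1;
  }

  return {
    medianTimeToFindMs: median(timesToFind),
    searchToAccessConversion: searched === 0 ? 0 : requested / searched,
  };
}

const sample: UsageEvent[] = [
  { sessionId: "s1", type: "search", timestampMs: 0 },
  { sessionId: "s1", type: "asset_opened", timestampMs: 40000 },
  { sessionId: "s1", type: "access_requested", timestampMs: 60000 },
  { sessionId: "s2", type: "search", timestampMs: 1000 },
  { sessionId: "s2", type: "asset_opened", timestampMs: 21000 },
  { sessionId: "s3", type: "asset_opened", timestampMs: 5 }, // no search, so ignored
];
const metrics = taskSuccess(sample);
console.log(metrics); // { medianTimeToFindMs: 30000, searchToAccessConversion: 0.5 }
```

The median is used instead of the mean so that a few very long sessions do not dominate the time-to-find figure.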

| Analytics copilot | Natural language queries across usage data to spot trends and cohorts faster than manual dashboards. |

| AI session replay | LLM-generated insights from user sessions instead of watching hundreds of recordings manually. |

| Continuous interviews | Weekly short conversations with real users, built into the process rather than run as one-off studies. |

| Feedback synthesis | Combining survey responses, support tickets, and interviews into themed insights automatically via LLM synthesis. |

| Listen | Collect feedback through multiple channels — surveys, session replays, interviews, and support tickets. |

| Synthesize | Theme the findings and prioritize by impact on user tasks and business goals. |

| Ship | Iterate on the most critical friction points in fast, focused cycles. |

| Validate | Re-measure to confirm the change actually moved the metric. |

09 — Outcome

The platform is designed, validated with real users, and handed off for final development. Launch is weeks away.

What's already in place: a three-tier design system with a dev-integrated MCP pipeline, a two-phase UX strategy, two rounds of stakeholder validation, and a tested Figma-to-code workflow that's already producing components 1:1 with the design system.

10 — Reflection

What I'd do differently

Involve data governance stakeholders earlier in the process. Their requirements only became clear mid-project, which meant reworking parts of the access request flow that were already in motion.

What this project taught me

Leading a project end-to-end as the only designer taught me that seniority isn't about headcount — it's about owning decisions across the whole stack. And AI in the design process isn't magic, it's leverage — it speeds up the parts you already understand well, and it's useless for the parts you don't. The best outcomes came when I knew exactly what I wanted before asking for it.

What I'm taking forward

The Figma + MCP pipeline is going into every project from here. Once you've shipped components 1:1 with the design system on the first pass, traditional hand-off feels broken.